Most company websites are built the same way. A brief goes to an agency or an internal team. Weeks of wireframes, rounds of feedback, a CMS gets bolted on, and eventually something ships. Updates take days. Costs stack up. Nobody is thrilled with it.

It is easy to spend all your time consulting for clients on the right way to do things while your own tech takes a back seat to customer demands. This time, we decided to dogfood it.

“You can’t advise on this stuff unless you have lived experience. Theory only gets you so far.” — Ed Marshall, CTO

We rebuilt enablis.co almost entirely with AI, using Claude Code as the primary engineering tool. Not just for generating copy or tweaking CSS, but for the entire build: page creation, CI pipelines, deployment workflows, accessibility testing, SEO auditing, and ongoing content changes.

The result is a slicker, more engaging site that actually represents who we are. It loads faster, scores significantly better for SEO, costs under £20 a month to run (down from around £600), and treats AI agents as a considered consumer of content alongside human visitors. We think that last point is key for the future of how people and machines discover businesses online.

This is not a victory lap. It is a practical breakdown of what we did, why it worked, and what we would recommend if you are thinking about doing something similar.

Why we rebuilt

Our old site ran on WordPress. It worked, but it had problems that most marketing teams will recognise. WordPress is fine for a quick text change, but any meaningful change (new page types, layout tweaks, integration work) required developer time or careful configuration. The hosting was expensive for what we got. Performance was acceptable but not exceptional. And the site looked like every other UK technology consultancy, with generic messaging that did not reflect how we actually work.

We wanted something that matched our positioning as an AI-native consultancy. If we are advising clients on AI adoption, our own operations should reflect that. A static marketing site felt like the right proving ground: bounded scope, clear success criteria, and a real audience.

The stack

We chose Astro as the framework. Astro is purpose-built for content-driven sites and static serving, and it is remarkably lightweight compared with heavier, React-based frameworks such as Next.js. It generates static HTML at build time and ships zero client-side JavaScript by default. That matters for performance: our pages load fast because there is no framework bundle to download and parse. All interactivity (smooth scrolling with Lenis, magnetic button effects with GSAP, mobile menus) is written in vanilla TypeScript inside <script> tags within Astro components. No React. No Vue. No hydration overhead.

For hosting, we use AWS S3 with CloudFront as a CDN. A static site served from S3 costs almost nothing: the hosting itself comes to a few pounds a month. Compare that to a managed WordPress host with staging environments, plugin licences, database backups, and security patches. The old setup ran to roughly £600 per month. The new one costs under £20, and most of that is the domain and email forwarding.

The CI/CD pipeline runs on GitHub Actions. Every pull request triggers a build, then three parallel checks: internal link validation (linkinator), WCAG 2.1 AA accessibility testing (Playwright with axe-core across 25 pages), and Lighthouse CI with enforced thresholds for performance, accessibility, best practices, and SEO. PR previews deploy automatically to a temporary URL and a notification drops into our marketing team’s private Slack channel so they can see changes in context before anything merges. Once the PR is merged, the preview is torn down.
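The Slack notification is a small but useful piece of glue. Below is a minimal TypeScript sketch of how a preview step might post the link. It is an illustration, not our actual workflow code, and it assumes:

- a Slack incoming webhook, which accepts a JSON POST with a `text` field;
- Node 18+ for the global `fetch`;
- a hypothetical `pr-<number>` path scheme for preview URLs.

```typescript
// Sketch: post a PR preview link to Slack via an incoming webhook.
// Assumptions: Node 18+ (global fetch) and a hypothetical preview URL scheme.

interface PreviewInfo {
  prNumber: number;
  title: string;
  previewBaseUrl: string; // hypothetical base URL where previews are served
}

// Build the Slack message body. Kept pure so the format is easy to test.
function buildSlackPayload(info: PreviewInfo): { text: string } {
  const base = info.previewBaseUrl.replace(/\/+$/, ""); // drop trailing slashes
  const url = `${base}/pr-${info.prNumber}/`;
  return { text: `Preview ready for PR #${info.prNumber} (${info.title}): ${url}` };
}

// Send the message; incoming webhooks take a JSON POST with a "text" field.
async function notifySlack(webhookUrl: string, info: PreviewInfo): Promise<void> {
  const res = await fetch(webhookUrl, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(buildSlackPayload(info)),
  });
  if (!res.ok) {
    throw new Error(`Slack webhook failed with HTTP ${res.status}`);
  }
}
```

A workflow step would call `notifySlack` after the preview upload, reading the webhook URL from a repository secret.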

How Claude Code built it

This is where it gets interesting. We built the site primarily through conversation with Claude Code. Not by dictating pixel-perfect specs, but by describing intent: “Create a new case study page following the existing layout pattern” or “Add a job detail template that pulls from the jobs data file.” The AI reads the codebase, understands the architecture, and writes code that fits.

The key was establishing a strong foundation for the AI to work from. We wrote an extensive CLAUDE.md file that sits in the project root. Think of it as an operating manual for the AI. It includes:

- Component inventory: Every UI component listed with its purpose, so the AI uses existing components rather than creating duplicates

- Brand enforcement rules: Approved colour palette, gradient usage limits, typography rules, button shapes. No ad-hoc hex values. No gradients on buttons. Diagonal corner CTAs only.

- Copy tone guidelines: Active voice, max 3 sentences per paragraph, banned terms (no “synergy”, no “paradigm”), specific CTA formula

- SEO standards: Title length limits, meta description requirements, heading hierarchy rules, structured data schemas per page type

- Architecture patterns: How interactivity works (vanilla TypeScript, not React), how scroll animations are handled (single global IntersectionObserver), how paths resolve
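The "single global IntersectionObserver" rule from the architecture patterns looks roughly like this. In an Astro component the call would sit inside a `<script>` tag. This minimal sketch assumes three hypothetical details:

- a `data-reveal` attribute on animated elements;
- an `is-visible` CSS class that triggers the transition;
- a 15% visibility threshold.

```typescript
// Sketch of the single-global-IntersectionObserver scroll-reveal pattern.
// The data-reveal attribute, is-visible class and 0.15 threshold are hypothetical.

function initScrollReveal(
  root: ParentNode = document,
  selector = "[data-reveal]",
  visibleClass = "is-visible",
): IntersectionObserver {
  // One observer for the whole page, rather than one per component.
  const observer = new IntersectionObserver(
    (entries, obs) => {
      for (const entry of entries) {
        if (!entry.isIntersecting) continue;
        // CSS transitions keyed on this class perform the actual animation.
        entry.target.classList.add(visibleClass);
        // Reveal once, then stop watching the element.
        obs.unobserve(entry.target);
      }
    },
    { threshold: 0.15 },
  );
  root.querySelectorAll(selector).forEach((el) => observer.observe(el));
  return observer;
}
```

Sharing one observer avoids creating a separate instance per component. Because it is plain TypeScript, there is nothing to hydrate.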

CLAUDE.md is one of several mechanisms Claude Code provides for shaping how the AI interprets requests and what it produces; the custom slash commands and hooks covered below are others. It is not the only thing that matters, but it is a powerful one. A well-written instruction file means the AI produces on-brand, architecturally consistent code from the first attempt. It is also invaluable for team alignment. When multiple people are working with the AI across different tasks, the CLAUDE.md ensures everyone gets consistent output that follows the same conventions. Without it, you spend more time reviewing and correcting than you save.

Custom skills and automation

Beyond CLAUDE.md, we built custom slash commands (skills) that encode our quality standards directly into the development workflow:

/pre-pr runs a full validation suite before any pull request can be created. It builds the site, runs type checking, validates internal links, tests accessibility across all pages, runs Lighthouse against our score thresholds, checks meta tags on modified pages, validates heading hierarchy, and verifies new pages are registered for accessibility testing. The output is a structured report with a GO or NO-GO verdict.
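The GO or NO-GO verdict is a simple reduction over the individual checks. This sketch shows only that final step, with hypothetical type and function names; in this version, any single failure produces NO-GO.

```typescript
// Sketch: reduce individual /pre-pr check results to a GO / NO-GO report.
// Names and formatting are hypothetical; any failed check means NO-GO.

interface CheckResult {
  name: string; // e.g. "build", "links", "accessibility", "lighthouse"
  passed: boolean;
  detail?: string; // short explanation shown next to a failure
}

interface PrePrReport {
  verdict: "GO" | "NO-GO";
  failures: CheckResult[];
  text: string;
}

function buildPrePrReport(results: CheckResult[]): PrePrReport {
  const failures = results.filter((r) => !r.passed);
  const verdict = failures.length === 0 ? "GO" : "NO-GO";
  const lines = results.map(
    (r) => `${r.passed ? "PASS" : "FAIL"}  ${r.name}${r.detail ? ` (${r.detail})` : ""}`,
  );
  lines.push(`Verdict: ${verdict}`);
  return { verdict, failures, text: lines.join("\n") };
}
```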

/brand-review audits changed files against our brand guidelines. It checks for unapproved colours, gradient misuse, typography violations, incorrect button shapes, banned copy terms, passive voice in headings, and unfiltered photography. Every rule from the brand guide is encoded as an automated check.
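Many brand rules reduce to mechanical checks. This minimal TypeScript sketch covers two of them:

- flagging hex colours outside the approved palette;
- flagging banned copy terms.

The palette values are placeholders, not our brand colours. The banned terms are the two examples from our copy tone rules.

```typescript
// Sketch of two /brand-review rules as plain string checks.
// APPROVED_COLOURS is a placeholder palette, not the real brand guide.

const APPROVED_COLOURS = new Set(["#0b1f3a", "#ffffff", "#ff5a36"]);
const BANNED_TERMS = ["synergy", "paradigm"];

interface BrandViolation {
  rule: "unapproved-colour" | "banned-term";
  match: string;
  line: number; // 1-based line number in the file
}

// Expand shorthand (#fff -> #ffffff) and lowercase so both forms compare equal.
function normaliseHex(hex: string): string {
  const lower = hex.toLowerCase();
  if (lower.length !== 4) return lower;
  return "#" + lower.slice(1).split("").map((c) => c + c).join("");
}

function reviewFile(source: string): BrandViolation[] {
  const violations: BrandViolation[] = [];
  source.split("\n").forEach((text, index) => {
    const line = index + 1;
    // Any 3- or 6-digit hex colour must be in the approved palette.
    const hexPattern = /#(?:[0-9a-fA-F]{6}|[0-9a-fA-F]{3})\b/g;
    let match: RegExpExecArray | null;
    while ((match = hexPattern.exec(text)) !== null) {
      if (!APPROVED_COLOURS.has(normaliseHex(match[0]))) {
        violations.push({ rule: "unapproved-colour", match: match[0], line });
      }
    }
    // Banned terms are matched as whole words, ignoring case.
    for (const term of BANNED_TERMS) {
      if (new RegExp(`\\b${term}\\b`, "i").test(text)) {
        violations.push({ rule: "banned-term", match: term, line });
      }
    }
  });
  return violations;
}
```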

/seo-audit performs a comprehensive SEO analysis on the built HTML or a live URL. It checks meta tags, Open Graph tags, Twitter cards, structured data, heading hierarchy, image attributes, internal links, sitemap inclusion, and canonical URLs. Each issue is categorised as critical or recommended.
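A few of these checks can be sketched against built HTML. Three parts of the sketch are assumptions rather than our actual rules:

- the 60- and 160-character limits are common rules of thumb, used here as placeholders;
- the critical/recommended split is illustrative;
- regular expressions stand in for the HTML parser a production audit would use.

```typescript
// Sketch of a few /seo-audit checks on built HTML. Limits are placeholders,
// and the regexes assume simple, consistently formatted markup.

type Severity = "critical" | "recommended";

interface SeoIssue {
  severity: Severity;
  message: string;
}

const MAX_TITLE_LENGTH = 60; // placeholder limit
const MAX_DESCRIPTION_LENGTH = 160; // placeholder limit

function auditHtml(html: string): SeoIssue[] {
  const issues: SeoIssue[] = [];
  const add = (severity: Severity, message: string): void => {
    issues.push({ severity, message });
  };

  // Title: must exist; long titles tend to be truncated in search results.
  const title = /<title>([^<]*)<\/title>/i.exec(html)?.[1].trim();
  if (!title) add("critical", "Missing <title>");
  else if (title.length > MAX_TITLE_LENGTH) add("recommended", `Title is ${title.length} characters`);

  // Meta description: same pattern, longer limit.
  const description = /<meta\s+name="description"\s+content="([^"]*)"/i.exec(html)?.[1].trim();
  if (!description) add("critical", "Missing meta description");
  else if (description.length > MAX_DESCRIPTION_LENGTH) {
    add("recommended", `Description is ${description.length} characters`);
  }

  // Canonical URL.
  if (!/<link\s+rel="canonical"/i.test(html)) add("critical", "Missing canonical link");

  // Heading hierarchy: exactly one h1, and no skipped levels going down.
  const levels: number[] = [];
  const headingPattern = /<h([1-6])[\s>]/gi;
  let m: RegExpExecArray | null;
  while ((m = headingPattern.exec(html)) !== null) levels.push(Number(m[1]));
  const h1Count = levels.filter((level) => level === 1).length;
  if (h1Count !== 1) add("critical", `Expected one <h1>, found ${h1Count}`);
  for (let i = 1; i < levels.length; i++) {
    if (levels[i] > levels[i - 1] + 1) {
      add("recommended", `Heading jumps from h${levels[i - 1]} to h${levels[i]}`);
    }
  }
  return issues;
}
```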

/scaffold generates new pages from templates. It creates case study pages with the full prop interface pre-filled, or insight articles with the correct frontmatter schema. No blank-file guesswork.
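The sketch below shows what a scaffold step might produce for an insight article. The frontmatter fields, the `src/content/insights/` location and the function names are hypothetical; Astro content collections let a site define its own schema. Values are emitted with `JSON.stringify`, which works because JSON strings and arrays are valid YAML.

```typescript
// Sketch of a /scaffold-style generator for an insight article.
// The frontmatter schema and file location are hypothetical.

interface InsightDraft {
  title: string;
  description: string;
  author: string;
  tags: string[];
  date?: Date; // defaults to today
}

// Turn a title into a URL-safe file name, e.g. "Rebuilding our site" -> "rebuilding-our-site".
function slugify(title: string): string {
  return title
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-")
    .replace(/^-+|-+$/g, "");
}

function scaffoldInsight(draft: InsightDraft): { path: string; contents: string } {
  const pubDate = (draft.date ?? new Date()).toISOString().slice(0, 10); // YYYY-MM-DD
  // JSON strings and arrays are valid YAML, so JSON.stringify handles quoting.
  const frontmatter = [
    "---",
    `title: ${JSON.stringify(draft.title)}`,
    `description: ${JSON.stringify(draft.description)}`,
    `pubDate: ${pubDate}`,
    `author: ${JSON.stringify(draft.author)}`,
    `tags: ${JSON.stringify(draft.tags)}`,
    "---",
  ].join("\n");
  return {
    path: `src/content/insights/${slugify(draft.title)}.md`,
    contents: `${frontmatter}\n\nWrite the introduction here.\n`,
  };
}
```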

We also use a hook that gates PR creation. A shell script runs before any gh pr create command. If the branch includes changes to source files and /pre-pr has not passed for the current commit, the hook blocks the PR. This means quality checks are not optional. They are enforced at the tool level, before code ever reaches the pipeline.
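Our hook is a shell script, but its decision is small enough to show in the TypeScript used for the other sketches. This minimal sketch of the logic assumes the branch's changed files and the commit that last passed /pre-pr are already known. The `src/` prefix and the idea of recording a passing commit SHA are hypothetical details.

```typescript
// Sketch of the pre-PR gate's decision logic as a pure function.
// SOURCE_PREFIXES and the recorded "last passed" SHA are hypothetical.

interface GateInput {
  command: string; // the shell command the agent is about to run
  changedFiles: string[]; // files changed on the branch vs. the base branch
  headSha: string; // current commit
  lastPassedSha?: string; // commit for which /pre-pr last returned GO
}

interface GateDecision {
  block: boolean;
  reason?: string;
}

const SOURCE_PREFIXES = ["src/"];

function checkPrGate(input: GateInput): GateDecision {
  // Only intercept PR creation; every other command passes straight through.
  if (!/\bgh\s+pr\s+create\b/.test(input.command)) return { block: false };

  // Docs-only or config-only branches are not gated.
  const touchesSource = input.changedFiles.some((file) =>
    SOURCE_PREFIXES.some((prefix) => file.startsWith(prefix)),
  );
  if (!touchesSource) return { block: false };

  if (input.lastPassedSha !== input.headSha) {
    return {
      block: true,
      reason: `/pre-pr has not passed for ${input.headSha.slice(0, 7)}; run it before creating a PR.`,
    };
  }
  return { block: false };
}
```

As we understand Claude Code's hooks, a PreToolUse hook registered for the Bash tool receives the pending command as JSON on stdin, under `tool_input.command`. Exiting with status 2 blocks the call and passes stderr back to the agent. A wrapper script would read that input, apply logic like `checkPrGate`, and exit with 2 when `block` is true.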

The practical effect is that engineers can make changes through natural language, on the fly, from anywhere. Describe what you need, the AI writes the code, the pipeline validates it, and a preview link lands in Slack. What would normally take days of back-and-forth becomes a real-time cycle of describe, generate, review, merge. The team were reviewing live preview links within minutes of a request.

Making it accessible to the whole team

A site that only engineers can update has the same bottleneck as a CMS that only developers can configure. We wanted marketing and operations people to make changes without touching code.

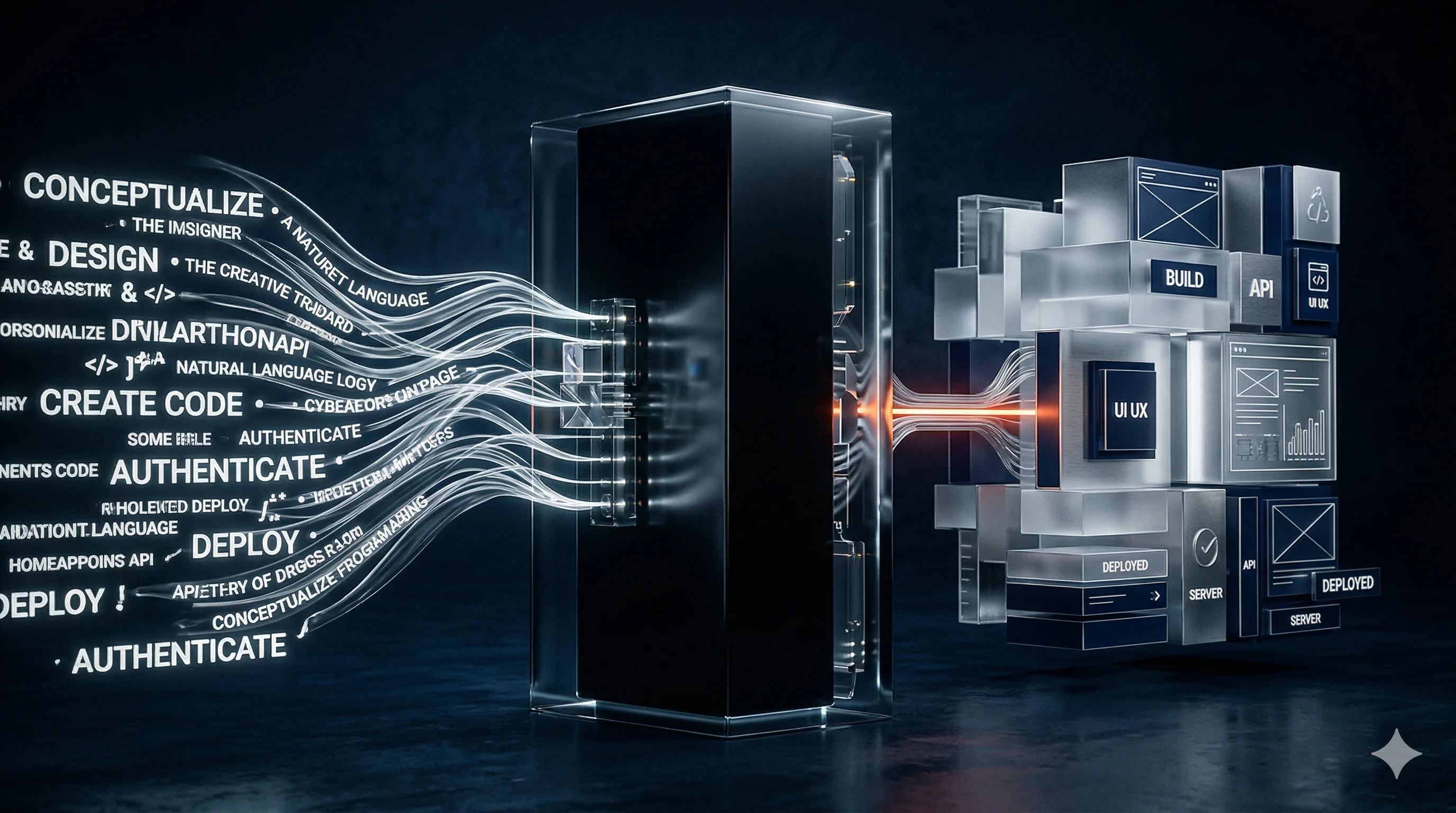

This is where Fusion comes in. Fusion is our AI orchestration platform, built around agentic AI. The tagline is “Ideas in. Outcomes out.” and that describes it well. Fusion lets non-technical team members write a brief describing what they want, in plain English. The platform’s agent reads the codebase, understands the architecture, produces a step-by-step plan for approval, then executes the changes. The result is a pull request on GitHub with a clean diff, a preview URL for review, and the originating ticket updated automatically. No git commands. No code editor. No CMS admin panel.

Fusion is not specific to this website. It is a general-purpose platform that connects to any codebase, any project management tool (Jira, Linear, GitHub Issues), and any MCP-compatible service. But the Enablis website was one of its first real applications, and it proved the model. Product owners and marketing team members can describe a change, approve the approach, review the preview, and ship, all without touching code.

Later this year we will publish a dedicated piece on what we have learned building Fusion and what it can do. For now, the short version is that it changes who can contribute to a codebase, and that has implications well beyond marketing websites.

The CMS is dead for us. Or more accurately, the CMS has been replaced by something that understands the full codebase and can make changes that a traditional CMS could never support. Want to update the copy on the careers page? Describe it. Want to add a new case study? Describe it. Want to change the colour of a button? Describe it. The AI writes the code, the pipeline validates it, and the change goes live.

This matters because the cost of change drives how often you change. When updates are expensive (developer time, CMS complexity, deployment risk), you update less. When updates are nearly free and near-instant, you update more. And a website that gets updated regularly is a website that performs better.

Building for AI discovery

Traditional SEO optimises for Google’s crawlers. That is still important, but it is not the whole picture anymore. AI-powered search (ChatGPT, Perplexity, Claude, Gemini) is growing, and these systems consume web content differently.

We built two things to address this:

llms.txt is a plain-text file served at the site root. It gives AI systems a structured, readable summary of who we are, what we do, and where to find key pages. Think of it as robots.txt for AI comprehension. Ours lists our services, case studies, and company information in a format optimised for LLM consumption.
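Because the site already holds its pages as data, llms.txt can be generated rather than hand-written. This minimal sketch follows the shape proposed at llmstxt.org: an H1 name, a blockquote summary, then H2 sections of Markdown links. The function name and the example content are illustrative.

```typescript
// Sketch: generate llms.txt from structured page data, following the
// llmstxt.org shape (H1 name, blockquote summary, H2 sections of links).

interface LlmsLink {
  title: string;
  url: string;
  note?: string; // optional one-line description after the link
}

interface LlmsSection {
  heading: string;
  links: LlmsLink[];
}

function buildLlmsTxt(name: string, summary: string, sections: LlmsSection[]): string {
  const out: string[] = [`# ${name}`, "", `> ${summary}`];
  for (const section of sections) {
    out.push("", `## ${section.heading}`, "");
    for (const link of section.links) {
      out.push(`- [${link.title}](${link.url})${link.note ? `: ${link.note}` : ""}`);
    }
  }
  return out.join("\n") + "\n";
}
```

In Astro, a static endpoint such as `src/pages/llms.txt.ts` could return this string from its `GET` handler. The file would then be rebuilt on every deploy.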

WebMCP (Model Context Protocol for the web) is a structured manifest at /.well-known/webmcp that describes our site’s pages, their intents, and user flows. It lets AI agents understand not just what pages exist, but what they are for: browsing jobs, submitting a contact request, reviewing case studies. As Datadog’s engineering team put it at the MCP Developer Summit, the new mandate is to “expose data and functionality through agent-friendly interfaces.”
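One way to keep a manifest like this honest is to define it as typed data and check it at build time. The field names (`pages`, `intent`, `flows`, `steps`) and paths are hypothetical illustrations of the pages, intents and flows described above. They are not taken from any WebMCP specification.

```typescript
// Hypothetical manifest shape: field names and paths are illustrative only,
// not taken from a WebMCP specification.

interface PageEntry {
  path: string;
  intent: string; // what a visitor or agent uses the page for
}

interface Flow {
  name: string;
  steps: string[]; // ordered page paths
}

interface SiteManifest {
  pages: PageEntry[];
  flows: Flow[];
}

// Build-time check: every flow step must point at a page the manifest declares.
function findBrokenFlowSteps(manifest: SiteManifest): string[] {
  const known = new Set(manifest.pages.map((p) => p.path));
  const broken: string[] = [];
  for (const flow of manifest.flows) {
    for (const step of flow.steps) {
      if (!known.has(step)) broken.push(`${flow.name}: ${step}`);
    }
  }
  return broken;
}

const manifest: SiteManifest = {
  pages: [
    { path: "/careers/", intent: "browse open jobs" },
    { path: "/contact/", intent: "submit a contact request" },
    { path: "/case-studies/", intent: "review case studies" },
  ],
  flows: [{ name: "get-in-touch", steps: ["/case-studies/", "/contact/"] }],
};
```

At build time the object could be serialised with `JSON.stringify` and written to /.well-known/webmcp. The check would fail the build if a flow points at a page that does not exist.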

We use the /seo-audit skill to continuously check that our SEO fundamentals are correct: meta tags, Open Graph, structured data (ProfessionalService, BlogPosting, BreadcrumbList schemas), heading hierarchy, image attributes, and canonical URLs. Every page gets audited before it ships.
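Structured data is another place where a small helper prevents drift between pages. This minimal sketch builds BreadcrumbList JSON-LD in the standard schema.org shape. The helper name and example URLs are hypothetical.

```typescript
// Sketch: build BreadcrumbList structured data (schema.org JSON-LD).

interface Crumb {
  name: string;
  url: string;
}

function breadcrumbJsonLd(crumbs: Crumb[]): string {
  const data = {
    "@context": "https://schema.org",
    "@type": "BreadcrumbList",
    itemListElement: crumbs.map((crumb, index) => ({
      "@type": "ListItem",
      position: index + 1, // schema.org positions are 1-based
      name: crumb.name,
      item: crumb.url,
    })),
  };
  // Escape "<" so the JSON cannot close the surrounding <script> tag early.
  return JSON.stringify(data).replace(/</g, "\\u003c");
}
```

An Astro layout could embed the result with `<script type="application/ld+json" set:html={breadcrumbJsonLd(crumbs)} />`.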

The numbers

Here is what the change looks like in practice:

- Hosting cost: ~£600/month (WordPress) to under £20/month (S3 + CloudFront)

- Time to publish a content update: Days (old process) to minutes (new process)

- Build and deploy time: Under 2 minutes from merge to live

- Lighthouse scores: Performance 85+, Accessibility 95+, Best Practices 90+, SEO 95+

- Accessibility coverage: WCAG 2.1 AA automated testing across 25 pages on every PR

- Total commits during build: 2,200+ over 8 weeks
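The Lighthouse floors above translate directly into a gate. This sketch expresses the rule in TypeScript using Lighthouse's category ids and its 0 to 1 category scores. The function name is hypothetical.

```typescript
// Sketch: enforce the Lighthouse floors listed above.
// Lighthouse category scores run from 0 to 1.

const LIGHTHOUSE_FLOORS: Record<string, number> = {
  performance: 0.85,
  accessibility: 0.95,
  "best-practices": 0.9,
  seo: 0.95,
};

// Return one message per category that is missing or below its floor.
function lighthouseFailures(scores: Record<string, number | undefined>): string[] {
  return Object.entries(LIGHTHOUSE_FLOORS)
    .filter(([category, floor]) => (scores[category] ?? 0) < floor)
    .map(([category, floor]) => `${category}: ${scores[category] ?? "missing"} < ${floor}`);
}
```

In Lighthouse CI itself, the same floors are usually declared as lighthouserc assertions rather than in custom code, for example `'categories:performance': ['error', { minScore: 0.85 }]`.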

The numbers matter, but they are not the whole story. Fundamentally, we have a site we are happier with. We have been able to shape it directly, so that it reflects what Enablis stands for and where we are heading as we continue to evolve alongside the AI revolution reshaping our industry. When you remove the friction from publishing, the site becomes a living asset rather than a static brochure that gets a refresh every two years.

What we learned

Start with the instruction file, not the code. The CLAUDE.md is worth more than any individual component. Invest time in writing clear, specific rules and the AI will follow them consistently. Vague instructions produce vague results.

Prototype relentlessly. As everyone working with AI has discovered, the cost of trying something out is dramatically lower than it used to be. We went through numerous visual iterations before landing on what you see now. Some worked, some did not. The point is that discarding an approach and trying another costs minutes, not days. Use that to your advantage.

Encode quality standards as automated checks. Humans forget. Automation does not. Every brand rule, SEO requirement, and accessibility standard should be a check that runs before code ships. Our custom skills (/pre-pr, /brand-review, /seo-audit) catch issues that would otherwise reach production.

Use hooks for enforcement, not goodwill. The pre-PR hook that blocks PR creation unless validation passes is a small script, but it changes behaviour. Quality gates work when they cannot be skipped.

Put it in front of people you trust. This one has nothing to do with AI. We are fortunate to have people close to the business whose opinions we really value. We put the site in front of them throughout the build and gained honest feedback that shaped the final product. No amount of automated testing replaces a trusted person telling you that something does not feel right.

Give non-engineers a path to contribute. The technical team should not be the bottleneck for content changes. Natural language editing through Fusion means our marketing team can update the site without filing tickets and waiting.

Build for the next generation of search. llms.txt and WebMCP are small investments that position your site for AI-driven discovery. The sites that do this now will have an advantage as AI search grows.

Static is not a limitation. Zero client-side framework means faster load times, lower hosting costs, and simpler security posture. We have magnetic buttons, smooth scrolling, scroll-reveal animations, and a dark-mode toggle, all without shipping a framework bundle. Vanilla TypeScript in Astro’s <script> tags handles everything.

Should you do this?

If you are running a marketing site on an expensive CMS and your team is frustrated by the speed of updates, yes. The combination of static site generation, AI-assisted development, and natural language editing is genuinely better for most marketing sites. It is cheaper, faster, more secure, and gives you more control.

The caveat is that you need someone who understands the principles, even if the AI writes the code. AI does not replace design thinking or content strategy. It replaces the mechanical work of translating those decisions into code. You still need someone who knows what good looks like and can review what the AI produces.

For us, this project was a proof point. We advise clients on AI adoption every day. Building our own site this way forced us to live with the same tools, the same workflows, and the same trade-offs we recommend. The lessons are real because the project is real.

The site you are reading this on was built this way. Every page, every component, every pipeline check. It costs us less than a round of drinks to run each month, and any member of the team can update it by describing what they want in plain English.

That feels like progress worth sharing.